How to Choose the Right LLM: A Developer’s Guide

April 13, 2026

The sheer volume of Large Language Models (LLMs) available today can be overwhelming. The decision you make when selecting a model will dictate not only the accuracy of its outputs but also the operational costs and latency of your infrastructure.

While proprietary SaaS-based APIs are excellent for rapid prototyping, many production-grade architectures need a level of control, privacy, and flexibility that is easiest to get from open-source (or open-weight) models you host yourself. Below, we break down how to evaluate these options and integrate them into your development workflow.

1. Evaluating the Model Ecosystem

Before integrating a model into your stack, it is crucial to measure its actual capabilities against the specific needs of your system:

Artificial Analysis: This platform lets you compare a broad range of models, highlighting the tradeoff between "intelligence" (based on benchmarks like MMLU-Pro) and inference cost. If you are scaling up to millions of queries for a simple task, you likely don't need to pay the premium for a PhD-level reasoning model.

LMSYS Chatbot Arena: Because models can overfit to traditional benchmarks, or have benchmark questions leak into their training data, this project, which originated at UC Berkeley, ranks models using over a million blind user votes. It is a good way to get a realistic "vibe score" for a model's abilities in reasoning, math, and writing, based on what the general AI community actually prefers.

Open LLM Leaderboard: Ideal for developers looking for open-source foundation models or fine-tuned versions on Hugging Face. It provides specific metrics and filters, which are invaluable if you are constrained by your GPU, want to run the model locally, or plan to do real-time inference on an edge device.
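Two of the constraints above, inference cost at scale and GPU memory, lend themselves to quick back-of-the-envelope arithmetic. The sketch below is only an illustration: the function names are hypothetical, the prices are made-up placeholders rather than real vendor pricing, and the memory estimate covers model weights only (it ignores the KV cache, activations, and runtime overhead).

```python
def monthly_api_cost(queries_per_month, tokens_in, tokens_out,
                     usd_per_million_in, usd_per_million_out):
    """Estimated monthly spend in USD for an API priced per million tokens."""
    cost_in = queries_per_month * tokens_in / 1_000_000 * usd_per_million_in
    cost_out = queries_per_month * tokens_out / 1_000_000 * usd_per_million_out
    return cost_in + cost_out


def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory needed for the weights alone, in GB (10^9 bytes)."""
    return params_billions * bits_per_weight / 8


# Hypothetical workload: 2M queries/month, 500 input and 100 output tokens each.
# The per-token prices below are placeholders, not quotes from any provider.
small_model = monthly_api_cost(2_000_000, 500, 100, 0.10, 0.40)
large_model = monthly_api_cost(2_000_000, 500, 100, 3.00, 15.00)
print(f"small model: ~${small_model:,.0f}/month, large model: ~${large_model:,.0f}/month")

# A 7B-parameter model at 16-bit versus 4-bit precision (weights only).
print(weight_memory_gb(7, 16), "GB vs", weight_memory_gb(7, 4), "GB")
```

Even with rough inputs, this kind of estimate makes it easier to decide whether a smaller or more heavily quantized model is worth benchmarking first.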

2. Local Testing and RAG Implementation

Once you've selected a promising model (for example, a model from the Granite family), the next step is to test it locally.

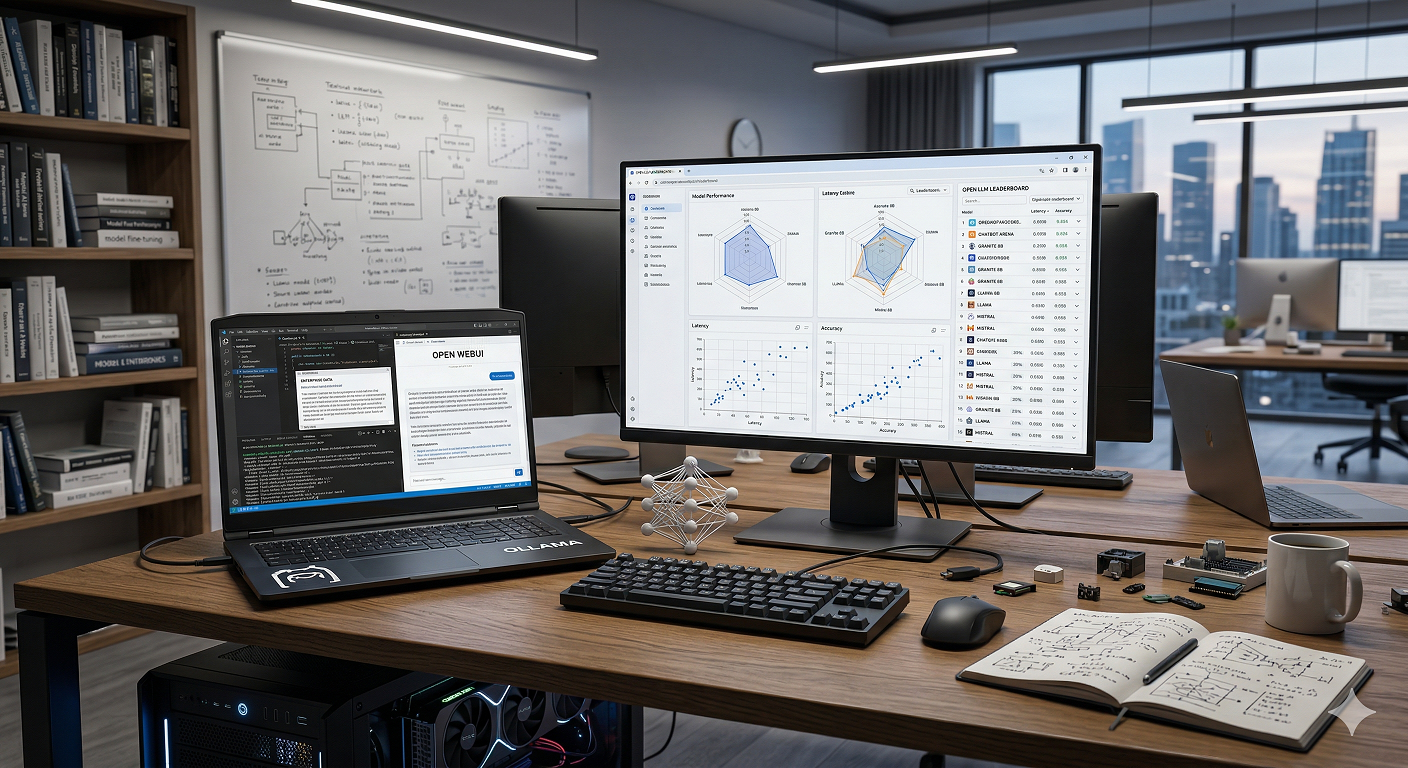

Local Execution with Ollama: Ollama is a popular developer tool that lets you run LLMs, vision models, and embedding models directly on your machine. By using quantized models (weights stored at reduced precision, so they are smaller and cheaper to run), you can get fast inference locally without relying on the cloud or exposing sensitive data.
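As a rough illustration of what local execution looks like from code, the sketch below sends a prompt to Ollama's local HTTP API using only the Python standard library. It assumes Ollama is running on its default port (11434) and that a model has already been pulled; the model tag `granite3.3` and the helper names `build_request` and `generate` are assumptions made for this example, so substitute whatever model you actually have installed.

```python
import json
import urllib.request

# Default address of a locally running Ollama server (assumed unchanged).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model, prompt):
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt, timeout=120):
    """Send the prompt and return the generated text from the JSON reply."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=timeout) as resp:
        return json.loads(resp.read().decode("utf-8"))["response"]


# Example call (needs a running Ollama server and a pulled model):
# print(generate("granite3.3", "Explain quantization in one sentence."))
```

Because the request never leaves `localhost`, the prompt and any data in it stay on your machine.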

Retrieval-Augmented Generation (RAG) with Custom Data: To make a model truly useful for your specific domain, you need to connect it to your own data. By pairing a local model served by Ollama with an open-source UI like Open WebUI, a vector database, and an embedding model, you can build a robust RAG pipeline. This lets you supply enterprise data the model was never trained on: the pipeline retrieves the relevant context for each question and returns citations, so every answer can be traced back to its source document.
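The retrieve-then-prompt flow described above can be sketched in a few lines. This is a deliberately simplified illustration, not how Open WebUI or any particular vector database works internally: the `embed` function is a toy word-count stand-in for a real embedding model, the in-memory dictionary stands in for a vector database, and the documents and file names are invented for the example.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, docs, k=2):
    """Return the k (source, text) pairs most similar to the question."""
    q = embed(question)
    ranked = sorted(docs.items(), key=lambda item: cosine(q, embed(item[1])), reverse=True)
    return ranked[:k]


def build_prompt(question, hits):
    """Assemble a prompt that carries the retrieved context and numbered sources."""
    context = "\n".join(
        f"[{i}] ({source}) {text}" for i, (source, text) in enumerate(hits, start=1)
    )
    return (
        "Answer using only the context below and cite sources by number.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Invented sample documents standing in for enterprise data.
docs = {
    "vacation-policy.txt": "Employees accrue 1.5 vacation days per month.",
    "expense-policy.txt": "Expenses above 500 USD require manager approval.",
    "onboarding.txt": "New hires receive a laptop on their first day.",
}
question = "How many vacation days do employees accrue?"
hits = retrieve(question, docs, k=1)
prompt = build_prompt(question, hits)
print(prompt)
```

The resulting prompt is what you would send to the local model; because each context line carries its source name, the model can cite where an answer came from.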

3. AI Coding Assistants in Your IDE

The utility of local LLMs extends right into your code editor. Instead of relying on external SaaS offerings, you can use a single model across multiple programming languages directly in your IDE.

By installing an open-source extension like Continue in VS Code or IntelliJ, you can connect your editor to your local Ollama instance. This setup allows you to chat with your codebase, ask the AI to explain complex files, suggest refactorings, or even generate inline documentation (like Javadoc comments) in real time.

Conclusion

Ultimately, model selection should be driven by your specific use case. By learning to use these evaluation tools and setting up a solid local testing environment with RAG capabilities, you can build smarter, more cost-effective, and more secure AI applications.
