AI Agents Best Practices: Monitoring, Governance, and Optimization

March 19, 2026

By 2028, an estimated one-third of all generative AI interactions will involve autonomous agents. Unlike traditional software, these agents do not just execute commands; they interpret intent, plan actions, and learn as they go. That dynamic, non-deterministic behavior introduces challenges that call for a rigorous evaluation strategy before deployment.

The Challenge of Agent Dynamics

Consider an agent designed to help customers find their dream home. It relies on tools such as calendars, database searches, and mortgage calculation functions. Because it manages memory and makes decisions in real time, many things can go wrong, from adopting an inappropriate tone to mishandling partial information from a client.

Critical Steps for Evaluation and Optimization

Defining Metrics: Establish performance indicators (accuracy, latency, task completion rate) alongside regulatory compliance metrics (bias, toxicity, and source attribution).
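As a minimal sketch of how the performance indicators above could be computed from a batch of evaluated runs, consider the following. The `EpisodeResult` record and its field names are assumptions made for illustration, not part of any particular framework, and the sample values are invented.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class EpisodeResult:
    """One evaluated agent run (hypothetical record shape)."""
    task_completed: bool
    answer_correct: bool
    latency_s: float


def summarize(results: list[EpisodeResult]) -> dict[str, float]:
    """Aggregate accuracy, task completion rate, and latency over a batch."""
    if not results:
        raise ValueError("no results to summarize")
    n = len(results)
    latencies = [r.latency_s for r in results]
    return {
        "accuracy": sum(r.answer_correct for r in results) / n,
        "task_completion_rate": sum(r.task_completed for r in results) / n,
        "median_latency_s": median(latencies),
        "max_latency_s": max(latencies),
    }


# Invented sample data, only to show the shape of the output.
sample = [
    EpisodeResult(True, True, 1.2),
    EpisodeResult(True, False, 2.0),
    EpisodeResult(False, False, 4.0),
    EpisodeResult(True, True, 0.8),
]
report = summarize(sample)
```

Compliance metrics such as bias or toxicity usually need a classifier or a human review step, so they are left out of this sketch.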

Adversarial Robustness: Prepare the agent to recognize fraud and manipulation attempts, so that it behaves predictably even when facing malicious users.

Data Preparation and Simulation: Create scenarios that reflect the real world and cover as many of the routes the agent might take as is practical, including cases where the client provides only partial information.

Implementing "LLM as a Judge": A popular technique involves using a high-capacity language model to evaluate whether your agent's outputs and actions are correct and safe.
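One way to wire up the judge, sketched under stated assumptions: the judge model is passed in as a plain function (`call_judge`) so no specific provider API is implied, and the prompt wording and JSON verdict shape are illustrative choices rather than a standard.

```python
import json
from typing import Callable

# Illustrative prompt; the doubled braces are literal braces for str.format.
JUDGE_PROMPT = """You are reviewing a real-estate assistant.
User request: {request}
Agent reply: {reply}
Respond with JSON only: {{"correct": true or false, "safe": true or false, "reason": "..."}}"""


def judge_reply(request: str, reply: str, call_judge: Callable[[str], str]) -> dict:
    """Ask a judge model for a verdict and validate its shape."""
    raw = call_judge(JUDGE_PROMPT.format(request=request, reply=reply))
    failed = {"correct": False, "safe": False, "reason": "unparseable judge output"}
    try:
        verdict = json.loads(raw)
    except json.JSONDecodeError:
        # Unparseable judge output counts as a failed check, never as a pass.
        return failed
    if not isinstance(verdict, dict):
        return failed
    return {
        "correct": verdict.get("correct") is True,
        "safe": verdict.get("safe") is True,
        "reason": str(verdict.get("reason", "")),
    }


def fake_judge(prompt: str) -> str:
    """Stand-in for a real model call, used only to keep the sketch runnable."""
    return '{"correct": true, "safe": true, "reason": "matches the listing data"}'


verdict = judge_reply("Find a 2-bed flat under 300k", "Here are three options.", fake_judge)
```

Treating malformed judge output as a failure is a deliberate design choice here: the judge is itself a non-deterministic model, so its responses deserve the same validation as the agent's.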

Tool Integration Testing: Verify that external function calls and integrated tools return correct results and handle failures cleanly, so the experience stays seamless for the end user.
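To make tool testing concrete, here is a small sketch built around the mortgage calculation tool from the home-finding example. The function names, the `TOOLS` registry, and the structured error format are all hypothetical; the payment itself uses the standard fixed-rate amortization formula.

```python
from typing import Any, Callable


def monthly_mortgage_payment(principal: float, annual_rate: float, years: int) -> float:
    """Fixed-rate monthly payment: P * r * (1 + r)^n / ((1 + r)^n - 1)."""
    if principal <= 0 or years <= 0 or annual_rate < 0:
        raise ValueError("principal and years must be positive, rate non-negative")
    n = years * 12          # number of monthly payments
    r = annual_rate / 12    # monthly interest rate
    if r == 0:
        return principal / n
    growth = (1 + r) ** n
    return principal * r * growth / (growth - 1)


# Hypothetical registry mapping tool names to callables.
TOOLS: dict[str, Callable[..., Any]] = {"mortgage_payment": monthly_mortgage_payment}


def call_tool(name: str, arguments: dict[str, Any]) -> dict[str, Any]:
    """Dispatch a tool call and turn failures into structured errors."""
    tool = TOOLS.get(name)
    if tool is None:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "result": tool(**arguments)}
    except (TypeError, ValueError) as exc:
        # Missing or invalid arguments are reported back instead of crashing.
        return {"ok": False, "error": str(exc)}


zero_rate = call_tool("mortgage_payment", {"principal": 120000, "annual_rate": 0.0, "years": 10})
typical = call_tool("mortgage_payment", {"principal": 200000, "annual_rate": 0.06, "years": 30})
```

Tests for a tool like this would typically cover a known-good value, boundary inputs such as a zero interest rate, and malformed calls of the kind a model might produce, such as an unknown tool name or a missing argument.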

The Continuous Improvement Cycle

Building agents is an iterative process. After assessing outcomes, expect to adjust prompts, optimize flows, and sometimes sacrifice one metric (such as latency) to improve another (such as accuracy). Once the agent is live, production monitoring feeds its findings back into the development cycle, so that each version is more robust than the last.
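The latency versus accuracy trade-off can be made explicit with a simple selection rule. The sketch below assumes a hypothetical `Candidate` record per agent configuration and picks the most accurate one that fits a latency budget; the configuration names and numbers are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    """Measured results for one agent configuration (hypothetical shape)."""
    name: str
    accuracy: float
    p95_latency_s: float


def pick_candidate(candidates: list[Candidate], max_latency_s: float) -> Optional[Candidate]:
    """Return the most accurate candidate within the latency budget, if any."""
    eligible = [c for c in candidates if c.p95_latency_s <= max_latency_s]
    if not eligible:
        return None
    # Ties on accuracy are broken in favor of the faster configuration.
    return max(eligible, key=lambda c: (c.accuracy, -c.p95_latency_s))


# Invented measurements for three configurations.
candidates = [
    Candidate("short-prompt", accuracy=0.82, p95_latency_s=1.5),
    Candidate("with-retrieval", accuracy=0.90, p95_latency_s=3.0),
    Candidate("multi-step-plan", accuracy=0.94, p95_latency_s=7.0),
]
chosen = pick_candidate(candidates, max_latency_s=4.0)
```

A hard budget is only one possible policy; a weighted score across several metrics is another, and which one fits depends on what the product can tolerate.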

Blog

Custom Software vs. Off-the-Shelf Solutions: What to Choose?

Private Agentic AI: Implementing Intelligent Flows Without Compromising Data

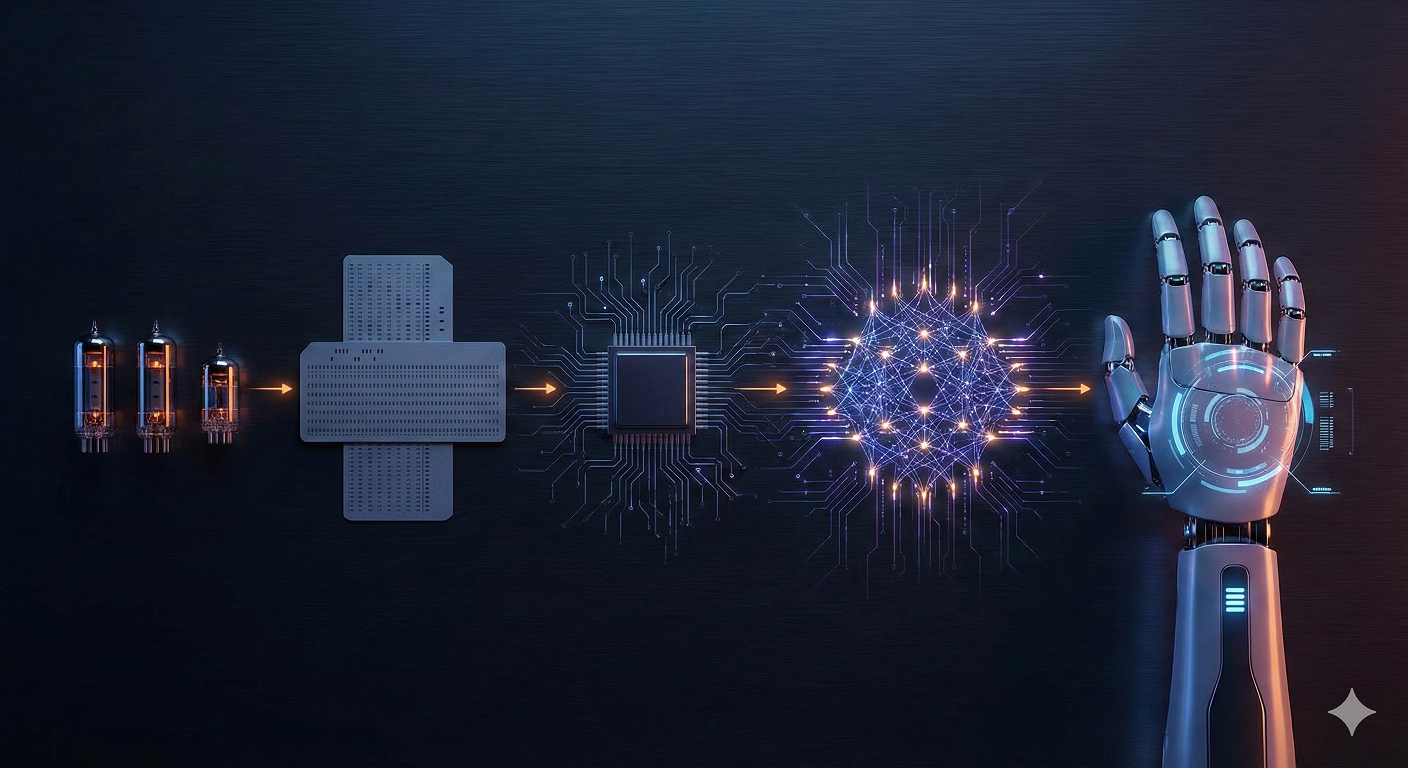

A Brief History of Artificial Intelligence: From the Turing Test to Agentic AI

Start your Project

Tell us about your needs and how we can help you.